Simpler Sensor Fusion

Sensor fusion is the problem of transforming data that arrives from sensors into a form that is useful for making decisions or estimating the values of desired variables. The data may come from many different sensors, from multiple readings of a single sensor, or from any combination of these. The problem appears everywhere, including robot localization, map building, SLAM, target tracking, counting people, GPS, and so on. (A classical name for this area is filtering, which sometimes has confusing connotations.) The key challenge is to design an information space that can be incrementally updated as new sensor data arrives, yet is sufficiently powerful for the desired task. In most problems there is an underlying state space, in which the state at a given time cannot be directly observed. The classical sensor fusion goal is to estimate the state; however, a more interesting situation is to estimate just enough about the state to be sufficient for a task, such as counting the number of people in a room (rather than calculating their precise positions).
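
To make the "just enough for the task" idea concrete, here is a minimal Python sketch, assuming a hypothetical doorway sensor that reports only the direction of each crossing ("in" or "out"). The information state is a single integer count that is updated incrementally as each reading arrives; the people's positions are never represented. The names `update` and `run_filter` and the two-symbol observation alphabet are illustrative, not taken from any published system.

```python
from functools import reduce

# Hypothetical observation alphabet: a directional beam at a doorway
# reports "in" or "out" each time someone crosses it.
DELTA = {"in": +1, "out": -1}

def update(count: int, observation: str) -> int:
    """One filter step: new information state from old state and new reading."""
    return count + DELTA[observation]

def run_filter(initial_count: int, observations: list[str]) -> int:
    """Fold the update over the observation history, one reading at a time."""
    return reduce(update, observations, initial_count)

print(run_filter(0, ["in", "in", "out", "in"]))  # 2
```

The filter stores one integer no matter how long the history grows, which is the point: the information space is designed for the counting task rather than for full state estimation.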

One of the most common representations is a probability distribution over a state space, which results in Bayesian filters (see this book, for example). Within that category, the most useful method to date has been the Kalman filter, which is optimal only in the narrow setting of linear Gaussian systems but seems to work well more broadly. In our research, we develop more general representations that can be customized to particular tasks. These are based on the notion of information spaces (which we call I-spaces) from game theory (von Neumann and Morgenstern, 1944) and control theory (Basar and Olsder, 1982). For an introduction to I-spaces, see Chapter 11 of my Planning Algorithms book or the "Minimalism in Robotics" tutorial on my tutorials page.
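
For contrast with the set-based methods discussed here, the following is a minimal Python sketch of a scalar Kalman filter, the classical Bayesian case in which the belief is a Gaussian summarized by a mean and a variance. The one-dimensional random-walk motion model and the noise variances `q` and `r` are illustrative assumptions, not taken from any particular system.

```python
from dataclasses import dataclass

@dataclass
class Gaussian:
    mean: float
    var: float

def kalman_step(belief: Gaussian, z: float, q: float, r: float) -> Gaussian:
    """One predict/update cycle of a scalar Kalman filter.

    Assumed model (illustrative only):
        x_k = x_{k-1} + w_k,  w_k ~ N(0, q)   (random-walk motion)
        z_k = x_k + v_k,      v_k ~ N(0, r)   (direct noisy measurement)
    """
    # Predict: the random walk leaves the mean unchanged and adds process noise.
    mean_pred = belief.mean
    var_pred = belief.var + q
    # Update: blend prediction and measurement, weighted by the Kalman gain.
    gain = var_pred / (var_pred + r)
    mean_new = mean_pred + gain * (z - mean_pred)
    var_new = (1.0 - gain) * var_pred
    return Gaussian(mean_new, var_new)

belief = Gaussian(mean=0.0, var=1.0)
for z in [1.0, 1.2, 0.9]:
    belief = kalman_step(belief, z, q=0.01, r=0.5)
print(belief)
```

Every quantity here depends on the Gaussian noise assumptions and the chosen variances, which are exactly the kinds of modeling commitments that the combinatorial approach tries to avoid.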

We have worked on this problem for over two decades. One key outcome is the introduction of a new category of sensor fusion methods called combinatorial filters (or combinatorial sensor fusion). These are largely based on sets and functions, and are thus more general than Bayesian representations, which require measure-theoretic foundations, prior distributions, and many more modeling assumptions. Because they are simpler, combinatorial filters provide insight into how to develop simpler, more reliable robot systems. Furthermore, they often reduce computational costs because they have less information to maintain. The methods directly address the uncertainty that arises from many-to-one mappings of states to sensor outputs, rather than focusing on sensor noise (but both can be handled together). This carefully avoids many modeling burdens that are not absolutely necessary for the problem at hand. An overview of these concepts is provided by my (relatively short) book Sensing and Filtering.
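
A set-based filter in this spirit keeps, as its information state, the set of all states consistent with everything observed so far: it takes every possible successor under the action and then keeps only those in the preimage of the new sensor reading. The following is a minimal Python sketch under assumed toy models (a five-cell corridor and a sensor that only reports whether the robot is at a wall); the function and model names are hypothetical and not taken from the cited papers. No probabilities or priors appear anywhere.

```python
from typing import Callable, FrozenSet

State = int

def nondeterministic_filter(
    possible: FrozenSet[State],
    action: str,
    observation: str,
    transition: Callable[[State, str], FrozenSet[State]],
    sensor: Callable[[State], str],
) -> FrozenSet[State]:
    # Predict: every state reachable from some currently possible state.
    predicted = frozenset(x2 for x in possible for x2 in transition(x, action))
    # Update: keep only states in the preimage of the observation, h^{-1}(y).
    return frozenset(x for x in predicted if sensor(x) == observation)

N = 5  # hypothetical corridor with cells 0..4

def corridor_transition(x: State, action: str) -> FrozenSet[State]:
    # Deterministic here; a nondeterministic model would return several cells.
    step = {"left": -1, "right": +1}[action]
    return frozenset({min(max(x + step, 0), N - 1)})

def wall_sensor(x: State) -> str:
    # Many-to-one mapping: all interior cells produce the same reading.
    return "wall" if x in (0, N - 1) else "free"

possible = frozenset(range(N))  # total ignorance about the starting cell
history = [("right", "free"), ("right", "free"), ("right", "wall")]
for action, obs in history:
    possible = nondeterministic_filter(possible, action, obs, corridor_transition, wall_sensor)
print(sorted(possible))  # [4]
```

Even though the sensor cannot distinguish interior cells, combining its readings with the known motions narrows the set from all five cells to a single one.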

Some highlights of the papers below are:

- Establishing the existence and uniqueness of minimal filters (information transition systems) in an extremely general setting (IJRR 23). This work also establishes fundamental limits on planning and learning for robots.

- Robot localization using only a few distance measurements (ICRA 23 and arXiv 22).

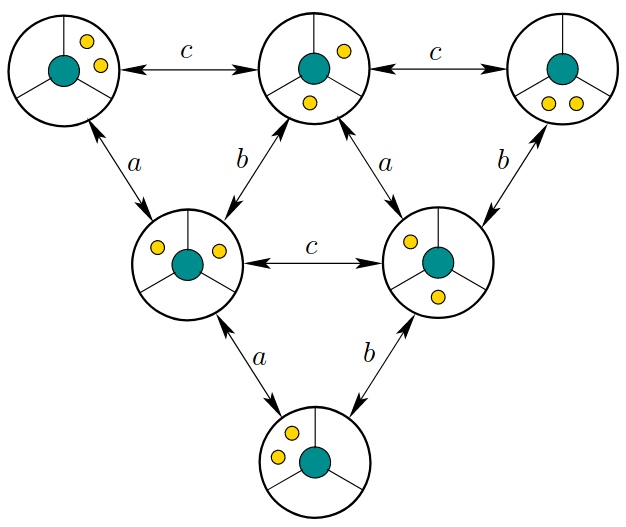

- The reduction of numerous tracking problems to a simple paradigm of obstacles and partially distinguishable sensor beams (ACM TSM 14). This paper explains the figure above.

- The introduction of shadow information spaces and corresponding combinatorial filters for counting and tracking moving targets (TRO 2012).

- The introduction of sensor lattices for comparing the power of sensors in a sensor fusion system based on the level of ambiguity of each sensor mapping, to help in the design of planning and filtering algorithms (RoMoCo 19).

- Head tracking for virtual reality headsets, which is used in the Oculus Rift and many other consumer products (ICRA 14). We also developed a more advanced version, which takes into account human perception, but that is unfortunately patented.
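
The sensor-lattice comparison can be made concrete on a finite state space: each sensor mapping partitions the states into preimages, and one sensor is at least as powerful as another when its partition refines the other's (every block of the finer partition lies inside a single block of the coarser one). The following is a minimal Python sketch under that reading; the state set and the three sensor mappings are hypothetical toy examples, and the function names are not from the RoMoCo paper.

```python
from collections import defaultdict
from typing import Callable, Hashable, Iterable

def preimage_partition(states: Iterable[int], sensor: Callable[[int], Hashable]) -> list[frozenset[int]]:
    """Group states by the reading they produce: one block per preimage h^{-1}(y)."""
    blocks: dict = defaultdict(set)
    for x in states:
        blocks[sensor(x)].add(x)
    return [frozenset(b) for b in blocks.values()]

def dominates(states: Iterable[int], h1: Callable[[int], Hashable], h2: Callable[[int], Hashable]) -> bool:
    """True if h1's preimage partition refines h2's, so h1's reading determines h2's."""
    for block in preimage_partition(states, h1):
        if len({h2(x) for x in block}) > 1:
            return False
    return True

# Hypothetical toy sensors over states 0..9.
states = range(10)

def exact(x: int) -> int:
    return x

def parity(x: int) -> int:
    return x % 2

def lower_half(x: int) -> bool:
    return x < 5

print(dominates(states, exact, parity))       # True
print(dominates(states, parity, exact))       # False
print(dominates(states, parity, lower_half))  # False
print(dominates(states, lower_half, parity))  # False
```

The last two calls show that `parity` and `lower_half` are incomparable: neither partition refines the other, so sensors are ordered partially rather than ranked on a single scale.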

Papers on Sensor Fusion

Localization with single or antipodal distance measurements. B. Ugav, S. M. LaValle, and D. Halperin. In N. Amato, K. Driggs-Campbell, E. Chinwe, M. Morales, and J. M. O'Kane, editors, Algorithmic Foundations of Robotics, XVI. Springer-Verlag, Berlin, 2025. [pdf].

Minimally sufficient structures for information-feedback policies. B. Sakcak, V. K. Weinstein, K. G. Timperi, and S. M. LaValle. In N. Amato, K. Driggs-Campbell, E. Chinwe, M. Morales, and J. M. O'Kane, editors, Algorithmic Foundations of Robotics, XVI. Springer-Verlag, Berlin, 2025. [pdf].

Optimal control of sensor-induced illusions on robotic agents. L. Medici, S. M. LaValle, and B. Sakcak. In Proc. European Control Conference, 2025. [pdf].

Equivalent environments and covering spaces for robots. V. K. Weinstein and S. M. LaValle. In M. Farber and J. Gonzalez, editors, Topology, Geometry and AI, pages 31-64. EMS Series in Industrial and Applied Mathematics, 2024. [pdf].

Separating intrinsic and extrinsic responses of whisker sensors using accelerometer. P. K. Routray, D. Subudhi, B. Sakcak, S. M. LaValle, P. Pounds, and M. Manivannan. IEEE Sensors Journal, 24(21):34635-34644, 2024. [pdf].

A mathematical characterization of minimally sufficient robot brains. B. Sakcak, K. G. Timperi, V. K. Weinstein, and S. M. LaValle. The International Journal of Robotics Research, 2023. [pdf].

Sensor localization by few distance measurements via the intersection of implicit manifolds. M. M. Bilevich, S. M. LaValle, and D. Halperin. In IEEE International Conference on Robotics and Automation, 2023. [pdf].

The limits of learning and planning: Minimal sufficient information transition systems. B. Sakcak, V. K. Weinstein, and S. M. LaValle. In S. M. LaValle, J. M. O'Kane, M. Otte, D. Sadigh, and P. Tokekar, editors, Algorithmic Foundations of Robotics, XV. Springer-Verlag, Berlin, 2023. [pdf].

Localization with few distance measurements. D. Halperin, S. M. LaValle, and B. Ugav. arXiv preprint arXiv:2209.04838, September 2022. [pdf].

An enactivist-inspired mathematical model of cognition. V. K. Weinstein, B. Sakcak, and S. M. LaValle. Frontiers in Neurorobotics, September 2022. [pdf].

Visibility-inspired models of touch sensors for navigation. K. Tiwari, B. Sakcak, P. Routray, M. Manivannan, and S. M. LaValle. In IEEE/RSJ International Conference on Intelligent Robots and Systems, 2022. [pdf].

Sensor lattices: Structures for comparing information feedback. S. M. LaValle. In Proc. IEEE/IFAC 12th International Workshop on Robot Motion and Control, pages 239-246, 2019. [pdf].

Head tracking for the Oculus Rift. S. M. LaValle, A. Yershova, M. Katsev, and M. Antonov. In IEEE International Conference on Robotics and Automation, 2014. [pdf].

Combinatorial filters: Sensor beams, obstacles, and possible paths. B. Tovar, F. Cohen, L. Bobadilla, J. Czarnowski, and S. M. LaValle. ACM Transactions on Sensor Networks, 10(3), 2014. [pdf].

Counting moving bodies using sparse sensor beams. L. E. Erickson, J. Yu, Y. Huang, and S. M. LaValle. IEEE Transactions on Automation Science and Engineering, 10(4):853-861, 2013. [pdf].

Exploration of an unknown environment with a differential drive disc robot. G. Laguna, R. Murrieta-Cid, H. M. Becerra, R. Lopez-Padilla, and S. M. LaValle. In IEEE International Conference on Robotics and Automation, 2014. [pdf].

Planning under topological constraints using beam-graphs. V. Narayanan, P. Vernaza, M. Likhachev, and S. M. LaValle. In IEEE International Conference on Robotics and Automation, 2013. [pdf].

Counting moving bodies using sparse sensor beams. L. Erickson, J. Yu, Y. Huang, and S. M. LaValle. In Proc. Workshop on the Algorithmic Foundations of Robotics, 2012. [pdf].

Optimal gap navigation for a disc robot. R. Lopez-Padilla, R. Murrieta-Cid, and S. M. LaValle. In Proc. Workshop on the Algorithmic Foundations of Robotics, 2012. [pdf].

Sensing and Filtering: A Fresh Perspective Based on Preimages and Information Spaces. S. M. LaValle. volume 1:4 of Foundations and Trends in Robotics Series. Now Publishers, Delft, The Netherlands, 2012. [pdf].

Controlling wild bodies using discrete transition systems. L. Bobadilla, O. Sanchez, J. Czarnowski, K. Gossman, and S. M. LaValle. 2012. Unpublished manuscript, [pdf].

Shadow information spaces: Combinatorial filters for tracking targets. J. Yu and S. M. LaValle. IEEE Transactions on Robotics, 28(2):440-456, 2012. [pdf].

Story validation and approximate path inference with a sparse network of heterogeneous sensors. J. Yu and S. M. LaValle. In IEEE International Conference on Robotics and Automation, 2011. [pdf].

Minimalist multiple target tracking using directional sensor beams. L. Bobadilla, O. Sanchez, J. Czarnowski, and S. M. LaValle. In Proceedings IEEE International Conference on Intelligent Robots and Systems, 2011. [pdf].

Sensor lattices: A preimage-based approach to comparing sensors. S. M. LaValle. September 2011. Department of Computer Science, University of Illinois, [pdf].

Mapping and pursuit-evasion strategies for a simple wall-following robot. M. Katsev, A. Yershova, B. Tovar, R. Ghrist, and S. M. LaValle. IEEE Transactions on Robotics, 27(1):113-128, 2011. [pdf].

Cyber detectives: Determining when robots or people misbehave. J. Yu and S. M. LaValle. In Proceedings Workshop on Algorithmic Foundations of Robotics (WAFR), 2010. [pdf].

Sensor beams, obstacles, and possible paths. B. Tovar, F. Cohen, and S. M. LaValle. In G. Chirikjian, H. Choset, M. Morales, and T. Murphey, editors, Algorithmic Foundations of Robotics, VIII. Springer-Verlag, Berlin, 2009. [pdf].

Distance-optimal navigation in an unknown environment without sensing distances. B. Tovar, R. Murrieta-Cid, and S. M. LaValle. IEEE Transactions on Robotics, 23(3):506-518, June 2007. [pdf].